GitHub Launches Stacked PRs as a First-Party Workflow

GitHub shipped Stacked PRs in private preview this week — a first-party CLI and UI for managing stacked pull requests. The workflow formalizes a pattern that was previously only practical for teams with dedicated internal tooling.

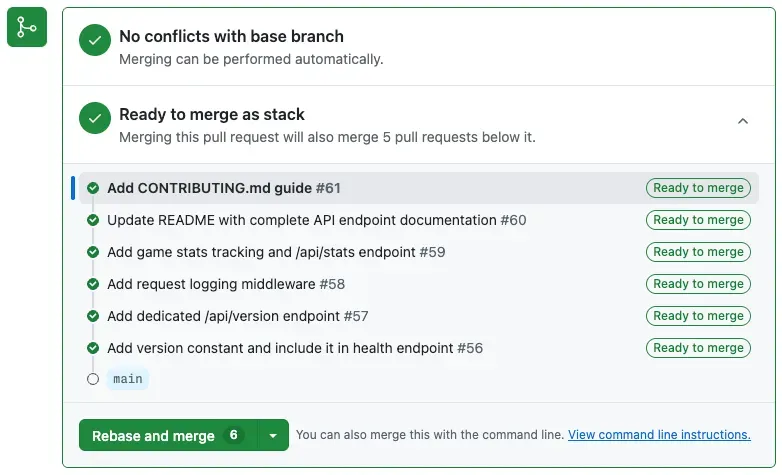

Stacked PRs pairs a first-party CLI with an integrated UI for managing stacked pull requests. It also ships with a set of agentic skills (fast becoming a table-stakes feature) that teach your AI coding agents how to work with stacks. A stack is a chain of dependent PRs in which each PR targets the branch of the one below it rather than targeting main directly, breaking a large change into sequentially reviewable slices. The tooling handles what has historically made this pattern painful: cascading rebases across dependent branches, merge sequencing, and consistent enforcement of branch protections across the chain.

What does it do?

The concept is straightforward: the "bottom" PR targets the main branch, and each subsequent PR targets the branch of the PR beneath it. What makes this workable at scale is how GitHub evaluates protections: each PR in the stack is assessed against the main branch rather than its immediate parent, so CI and branch rules apply consistently across the whole chain rather than only at the base.

All PRs in a stack are evaluated for rules and protections using their final target branch (e.g., main), not the branch they directly target.
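
To make that rule concrete, here is a minimal Python sketch of how a final target could be resolved by walking down the chain. It is an illustration only, not GitHub's implementation: the `StackedPR` type, the `final_target` helper, the PR numbers, and the branch names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StackedPR:
    number: int  # hypothetical PR number
    head: str    # branch holding this PR's commits
    base: str    # branch this PR directly targets


def final_target(pr: StackedPR, stack: list[StackedPR]) -> str:
    """Follow base branches down the stack until one is not another PR's head."""
    head_to_pr = {p.head: p for p in stack}
    seen = {pr.head}
    base = pr.base
    while base in head_to_pr:
        if base in seen:
            raise ValueError(f"cycle detected at branch {base!r}")
        seen.add(base)
        base = head_to_pr[base].base
    return base


# A three-layer stack: schema at the bottom, then API, then UI.
stack = [
    StackedPR(101, head="feat/schema", base="main"),
    StackedPR(102, head="feat/api", base="feat/schema"),
    StackedPR(103, head="feat/ui", base="feat/api"),
]

for pr in stack:
    print(f"#{pr.number}: targets {pr.base}, rules evaluated against {final_target(pr, stack)}")
```

Every PR in this example resolves to main, which is why the same protections apply to the top of the stack as to the bottom.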

The newly introduced gh stack CLI manages the local workflow (init, rebase, push, submit, checkout), and a Stack Map UI in GitHub gives reviewers a visual navigator showing each PR's status across your whole chain.
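
For a sense of what the CLI spares you, here is a rough sketch of the manual cascade using plain git. It only builds the command list; it says nothing about how gh stack works internally. The `cascade_rebase_plan` helper, the branch names, and the abbreviated commit hashes are hypothetical, and the sketch assumes you recorded each branch's old tip before restacking.

```python
def cascade_rebase_plan(
    branches: list[str], old_tips: dict[str, str], trunk: str = "main"
) -> list[str]:
    """Return git commands that restack `branches`, ordered bottom to top.

    `old_tips` maps a branch to the commit its tip pointed at before the
    restack, so each child replays only its own commits onto the rewritten
    parent.
    """
    if not branches:
        return []
    # The bottom branch is rebased straight onto the trunk.
    commands = [f"git rebase {trunk} {branches[0]}"]
    # Each higher branch moves from its parent's old tip onto the parent's new tip.
    for parent, child in zip(branches, branches[1:]):
        commands.append(f"git rebase --onto {parent} {old_tips[parent]} {child}")
    return commands


plan = cascade_rebase_plan(
    ["feat/schema", "feat/api", "feat/ui"],
    old_tips={"feat/schema": "a1b2c3d", "feat/api": "d4e5f6a"},
)
for command in plan:
    print(command)
```

Even with three branches, that is three rebases that must run in the right order with the right old tips, and any conflict has to be resolved before the next branch can move.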

Why should I care?

For engineers (and vibe coders) working on large features or refactors that span multiple layers, stacking lets you decompose the work naturally without sacrificing review discipline. You can route schema changes to the infra reviewer, the API layer to the backend team, and the UI to whoever owns the frontend. These rounds of feedback can then happen in parallel, instead of one monolithic PR waiting to clear a tangled reviewer queue.

GitHub Stacked PRs are still in private preview rather than generally available, so you'll need to apply for access. GitHub expects the feature to be enabled for repositories within an organization, so the lonely vibe coder will have to wait.

Last thought

At Google, some version of this workflow was already common practice. We were heavily encouraged to break large changes into dependent, sequentially reviewable layers through an internal code review doctrine known as "Small CLs." (CL = changelist, really the same thing as a PR; the term is a holdover from Google's Perforce days, a bygone era.)

The rationale behind Small CLs was straightforward: reduce the cognitive overhead a human reviewer faces on a complex project and prevent the rubber-stamping of bulk changes. But agentic coding tools now regularly spit out large reams of code, and the reviewer on the other end may also be an AI.

The longer-term trend worth watching: does the "keep PRs small" rule survive the transition to AI-assisted (or even AI-led) review?

This doctrine was intended for human reviewers, taking into account cognitive load, limited context, and reviewer fatigue. AI-powered code review agents don't share those constraints. In fact, a massive coherent diff may give an AI reviewer more to work with, potentially delivering better feedback than an artificially decomposed slice.

I've written about this topic on LinkedIn, and it's become one of the most contentious things I've posted on that platform. Many engineers are clearly still grappling with this fundamental shift in how they work. My own evaluations of agentic code reviewers on large PRs have been largely positive. I've run as many as 30 rounds of feedback between Opus and GitHub Copilot, and I've been consistently impressed by how well an agentic reviewer can find real needles in a haystack that large.

On the other hand, GitHub's Stacked PRs could enable new workflows. For example, human reviewers could focus on the layers they must own while AI agents run across the full stack simultaneously. But the question of what size any given PR should be will likely remain more of an art than a settled science.