The Necessary Evolution of "Research, Plan, Implement" as an Agentic Practice in 2026

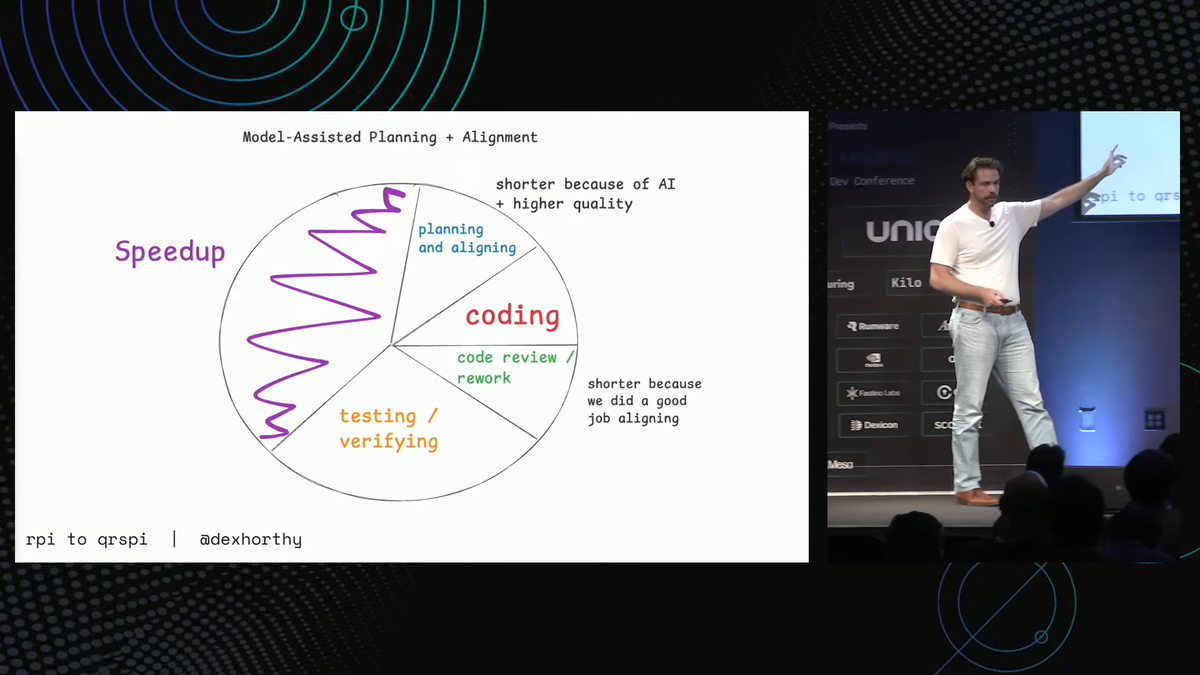

"Vibe coding" gave way to RPI, and now RPI is evolving into something more rigorous. Dexter Horthy's QRSPI breaks down where the original Research, Plan, Implement pattern fails in the real world and shows what a more disciplined agentic workflow looks like in 2026.

Dexter Horthy recently spoke at the Coding Agents 2026 conference in Mountain View, CA. His talk caught my attention because it clearly articulated an experience I initially struggled with on my agentic coding learning path.

As agentic coding harnesses like Claude Code and OpenCode have become popular, numerous "best" practices and methodologies have emerged to improve the success rate of these longer-running agentic coding tasks. One of the most commonly advocated (and arguably most intuitive) approaches is known as "Research, Plan, Implement" (RPI).

RPI in a nutshell

The core idea is that most people just throw a task at an AI and hope it figures things out (Andrej Karpathy names this practice "Vibe Coding" in Feb 2025). RPI instead breaks the agent's work into deliberate phases: it starts by researching the relevant code, files, and context (reading tickets, tracing data flows, using specialized sub-agents to explore different parts of the codebase in parallel); then it plans by synthesizing everything it learned into an objective, factual understanding and producing a structured plan document; and only then does it implement.

Critically, the research phase is kept strictly objective: no opinions, no jumping ahead to implementation details. Dexter frames this as the "compression of truth"; the agent is distilling reality rather than guessing.

What went wrong?

The most fundamental issue was the research step. On paper, RPI's research phase was meant to produce a clear, factual understanding of the codebase before any planning began. However, in practice, it involved a single subagent handling a broad, open-ended prompt: "Tell me how endpoint registration works, trace the logic flow, explain the workers that handle reticulation." Even worse, that one subagent was using roughly 40% of the context window just to get oriented. In Claude Code, the /create_plan command does too much with a single large prompt. That's a lot of budget spent before the real work even starts, and the output wasn't always reliable enough to justify it.

Moreover, the model responses may decide to skip steps in the plan created by that large prompt. It may do this opaquely, without warning the operator. In practice, the agent could miss the most critical alignment moments—the parts where it presents options and checks its understanding—without noticing until the plan was already incorrect.

Ultimately, this results in poor plans based on inadequate research. The irony is that RPI's philosophy is spot on: keep things objective, compress truth, and avoid outsourcing thinking. The problem wasn't the philosophy; it was that the mechanism for conducting research wasn't good enough to support it.

QRSPI introduces guardrails

Dexter's talk goes into the details of this approach, and I highly recommend you watch the video to see it in context. Here's the gist:

The core shift is breaking that single mega-prompt into genuine, separate agentic steps. This improves the likelihood that nothing can be quietly skipped. QRSPI stands for Questioning, Research, Structure, Plan, Implement, and the new letters are where all the interesting changes live.

Questioning comes first now, and it's a meaningful addition. Rather than firing a single broad research prompt and hoping the agent figures out the right path, QRSPI kicks off with structured multi-agent questioning: the agent surfaces explicit design decisions as options (Q1: Option A, B, or C?) and works through them before any research begins in earnest.

Structure is the other new addition, and it directly addresses the "sometimes skipped" problem. Dexter makes a clean distinction on one slide: Design equals where we're going. Structure Outline equals how we get there. In RPI, the step of getting feedback on the plan's structure before writing the full plan was optional and frequently dropped. In QRSPI, it's a first-class, mandatory step and forces the agent to produce a phased breakdown.

How I'm practicing this today

If you haven't heard of Obra's superpowers plugin for Claude Code, you should really check it out. It comes with a stock skill called "brainstorming," which does a fantastic job of collaboratively evolving and questioning concepts. I regularly use it, and as a thought partner, I've had a lot of success with it.

When it comes to structure, this is something Travis Corrgian (Principal collaborator along with me at Limbic Systems, our consultancy group) and I are actively tinkering with. Realistically, it remains essential that we balance context window bloat and encourage agents to engage with large-scale plans as close to just-in-time as possible. One ritual I've been seeing good results from is forcing the agents to write after-action reports in retrospective style. Specifically, I ask them to summarize the following:

What went well in this phase?

What went wrong in this phase?

What lessons should we carry forward into subsequent phases, and how?

This has been yielding some fascinating iterative improvements. In some cases, I've seen it suggest refinements or improvements to project-scoped Agent Skills, helping it close gaps for future iterations.

Travis and I intend to publicly contribute a workflow plugin that reflects our collective taste and battle-hardened opinions on structure, but we're not ready to share more on that today.

What's next?

Watch Dexter's whole presentation here; it's worth your time.

Watch Dexter's presentation here in its entirety.